Translation Management for the AI Era

Translation management systems were designed for human translators. Upload strings, assign reviewers, wait for approval. Your workflow has moved on. Your translation management should too.

Your stack evolved. Your translation workflow didn't.

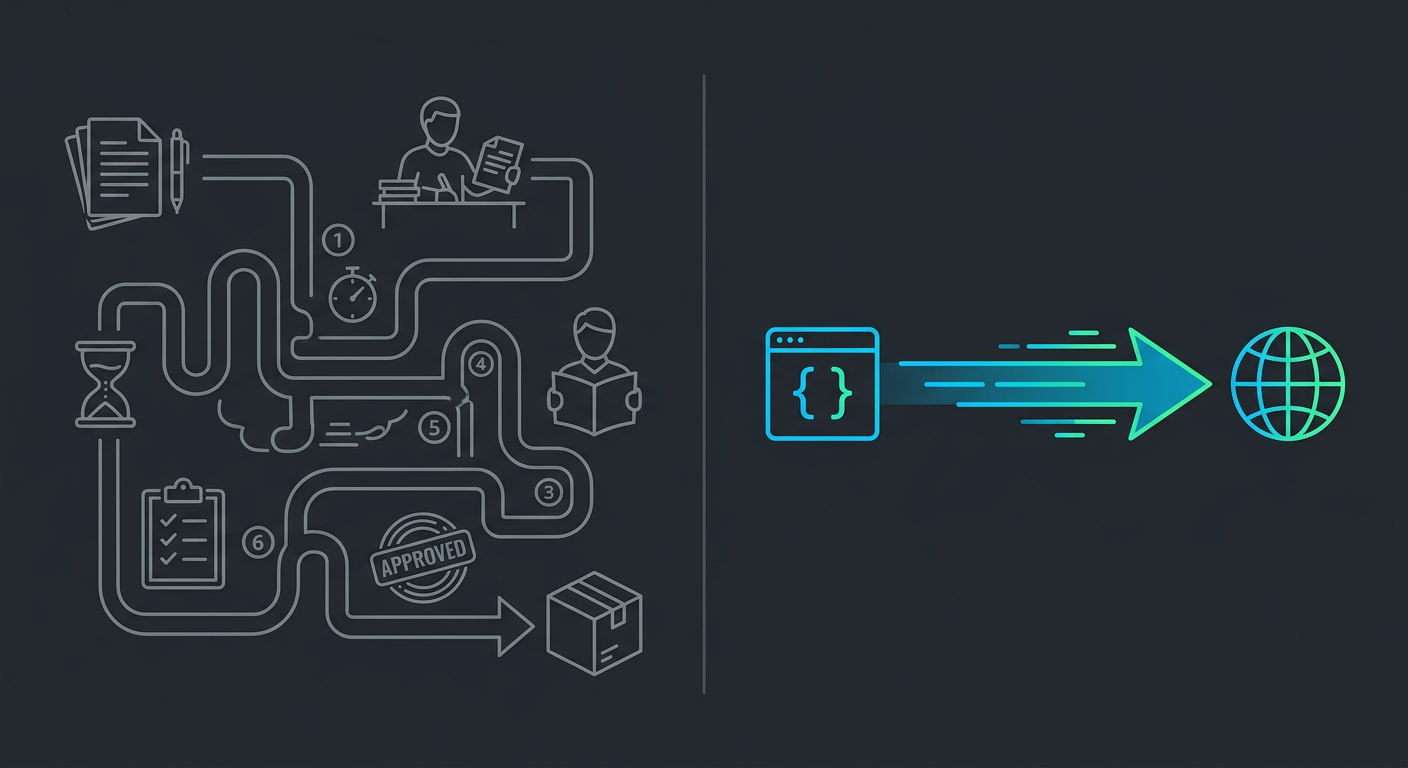

You deploy 50 times a day. Your AI agents write code, run tests, ship features. But when it comes to translations? You're still exporting strings to a dashboard, assigning them to reviewers, and waiting days for approval.

The bottleneck isn't translation quality. It's the workflow around it.

TMS platforms added AI. They didn't become AI.

Traditional TMS platforms bolted AI suggestions onto a workflow designed for human translators. The human is still in the loop: reviewing, approving, managing.

| Traditional TMS | i18n Agent |
| --- | --- |
| AI suggests → human reviews → human approves → strings exported → merged to codebase | AI agent calls API → localized files written → done |
| Dashboard for project managers to track translator progress | MCP protocol for AI agents to localize autonomously |
| Weeks of onboarding, connector setup, workflow configuration | One CLI command. Works in your IDE. |

TMS doesn't translate. It manages translators.

Here's what most people miss: a TMS doesn't actually translate anything. It routes strings to machine translation providers or human reviewers. The quality comes from somewhere else. You're paying for the middleman.

i18n Agent's quality pipeline

- Multi-model AI translation (GPT-4, Claude, specialized models)
- Context analysis and technical term verification
- Cultural and regional adaptation
- Quality scored and validated before delivery

| Traditional TMS | i18n Agent |
| --- | --- |
| Sends strings to basic MT provider → flags low-confidence → queues for human review → waits → approved | Multi-model AI pipeline → context analysis → technical term verification → cultural adaptation → quality scored → delivered |
| QA = a human reviewer catches errors after translation | QA = multi-step validation pipeline catches errors before delivery. Every translation gets a quality score. |

i18n Agent runs each translation through multiple specialized AI models: one for technical accuracy, one for cultural context, and one for consistency validation. Like a team of specialist translators, not one generalist.

i18n Agent wasn't built for translators. It was built for AI agents.

When your AI agent needs to localize a file, it doesn't open a dashboard. It calls i18n Agent through MCP, the same protocol it uses for everything else.
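To make that concrete, here is a minimal sketch of what such a call could look like on the wire. MCP tool invocations are JSON-RPC 2.0 messages using the `tools/call` method; the tool name `translate_file` is the one this page mentions, but the argument names (`file_path`, `target_language`) and the file path are assumptions for illustration, not the documented schema.

```python
import json
from itertools import count

# JSON-RPC requests carry an id so the response can be matched to the call.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message, ensure_ascii=False)


# Hypothetical arguments: the real translate_file schema may differ.
request = build_tool_call(
    "translate_file",
    {"file_path": "locales/en.json", "target_language": "de"},
)
print(request)
```

In practice the MCP client inside your IDE or agent builds and sends this message for you; the sketch only shows the shape of the request.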

- Regional sensitivity: Not just language, but cultural adaptation
- File-level integration: Works with your JSON, YAML, Markdown, PO files directly
- No human-in-the-loop overhead: AI agent handles the full workflow
- Works where you work: Claude Code, Cursor, VS Code, any MCP-compatible tool

One integration. Every language.

1. Install: Install the MCP server (one command).
2. Translate: Your AI agent calls translate_file or translate_text.
3. Done: Localized files appear in your project. Structure preserved, keys intact.
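If you want to confirm "structure preserved, keys intact" yourself, a small check along these lines could run in CI. This is a sketch that is not part of i18n Agent: the helper names `key_paths` and `structure_diff` and the sample strings are made up for illustration, and it assumes JSON locale files.

```python
import json


def key_paths(node, prefix=""):
    """Return the set of dotted key paths that lead to leaf values."""
    paths = set()
    if isinstance(node, dict):
        for key, value in node.items():
            paths |= key_paths(value, f"{prefix}{key}.")
    elif isinstance(node, list):
        for index, value in enumerate(node):
            paths |= key_paths(value, f"{prefix}{index}.")
    else:
        # Leaf value: drop the trailing dot added by the parent.
        paths.add(prefix[:-1])
    return paths


def structure_diff(source, localized):
    """List key paths missing from, or added to, the localized data."""
    src, loc = key_paths(source), key_paths(localized)
    return {"missing": sorted(src - loc), "extra": sorted(loc - src)}


source = json.loads('{"nav": {"home": "Home", "cart": "Cart"}, "cta": "Buy now"}')
localized = json.loads(
    '{"nav": {"home": "Startseite", "cart": "Warenkorb"}, "cta": "Jetzt kaufen"}'
)
diff = structure_diff(source, localized)
print(diff)  # {'missing': [], 'extra': []}
```

An empty `missing` and `extra` list means every key in the source file has a counterpart in the localized file and nothing was added.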

No dashboard. No approval chain. No project manager. Just translated files.

Pay for translations. Not for a platform.

TMS platforms charge €200–€1,000+/month, whether you translate one word or a million. Onboarding fees. Seat limits. Connector add-ons. Annual contracts.

i18n Agent charges €0.01 per word. That's it.

| Traditional TMS | i18n Agent |
| --- | --- |
| €200–€1,000+/month base + per-word MT costs + human reviewer costs | €0/month. Pay €0.01/word only when you translate. |
| A dashboard, a workflow engine, connector plugins, seat licenses | Native-level translations. Quality you'd expect from a human linguist, delivered in seconds. |
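As a rough worked example of those numbers, the sketch below computes how many words per month you would need to translate before €0.01/word costs as much as a flat platform fee. It uses only the figures quoted above and compares against the TMS base fee alone; per-word MT and reviewer costs on the TMS side are unknown here and left out, so the real break-even point would be higher. The function names are illustrative.

```python
PER_WORD_CENTS = 1  # €0.01 per word, as quoted above


def pay_per_word_cost_eur(words: int) -> float:
    """Cost in euros of translating `words` words at the per-word rate."""
    return words * PER_WORD_CENTS / 100


def break_even_words(monthly_fee_eur: int) -> int:
    """Words per month at which per-word pricing equals a flat monthly fee."""
    return monthly_fee_eur * 100 // PER_WORD_CENTS


for fee in (200, 1000):
    words = break_even_words(fee)
    print(f"€{fee}/month flat fee = {words:,} words at €0.01/word")
```

Under these assumptions the break-even sits at 20,000 words per month for a €200 plan and 100,000 words per month for a €1,000 plan; below that volume, paying per word is cheaper.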

Enterprise team?

Still stuck on a TMS contract? Let's talk about migrating.